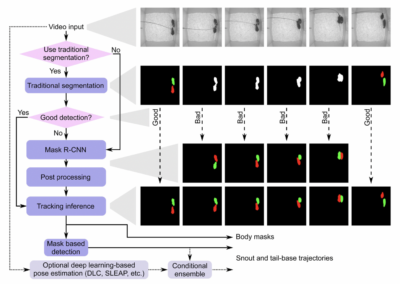

Researchers from the Mathis Group & Mathis Lab at EPFL/Harvard have collaboratively developed DeepLabCut, an open source software package for markerless pose estimation. The software features a robust and fast algorithm for transfer learning with deep neural networks, which allows for pose estimation from pre-recorded video files with minimal training data. Its graphical user interface and documentation make it straightforward to use for those without extensive coding experience, and it has been applied to video datasets of multiple species, including those commonly studied in neuroscience research labs. DeepLabCut has also been implemented in a number of other open source paradigms for non-invasive behavior tracking, including projects like B-SoID, Stytra, and Kinemouse Wheel.

We would *highly* encourage anyone interested in video tracking, particularly markerless tracking of subjects in behavioral paradigms, to check out the DeepLabCut website, as the documentation, how-to guides, example applications, and descriptions of the software are quite informative. There you will also find up-to-date information about DeepLabCut-related projects, including a DLC blog and the Model Zoo, which contains pre-trained models for different species, including Cute Cats, aDorable Dogs, and, perhaps more relevant for neuroscience, Mighty Mice. Furthermore, for more technical details about the computational power of the DeepLabCut algorithm, be sure to check out the GitHub repository (specifically the “Why Use DeepLabCut?” portion).

This research tool was created by your colleagues. Please acknowledge the Principal Investigator, cite the article in which the tool was described, and include an RRID in the Materials and Methods of your future publications. Project portal RRID:SCR_021391; Software RRID:SCR_021398

Check out the DeepLabCut website for the latest updates on this project, and learn more about related initiatives including the DLC Model Zoo and blog!
Read more about DeepLabCut in the original 2018 Nature Neuroscience publication.

In 2018, Dr. Mackenzie Mathis shared with OpenBehavior some of her experiences developing and sharing DLC as an open source project. Read about it here!

Check out the DeepLabCut GitHub!

Check out projects similar to this!

Read the Documentation

DeepLabCut Publication

Development Interview with Dr. Mackenzie Mathis

DeepLabCut GitHub
