JARVIS

Video analysis and tracking methods have become increasingly important in behavioral neuroscience research. Although several tools are now well developed for a variety of uses, most improvements have targeted tracking in 2D images. Timo Hueser and colleagues have developed JARVIS, a toolchain for 3D motion tracking from multi-camera recordings.

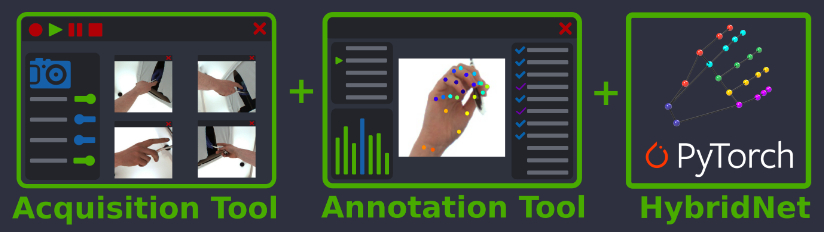

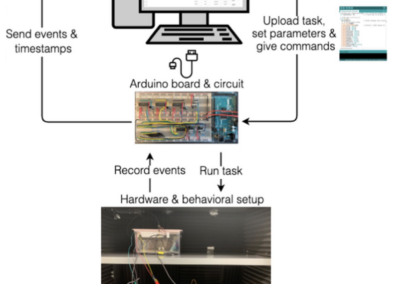

JARVIS is a three-part system. The first part is an acquisition tool for synchronized recording from multiple cameras; the group lists the camera models that work with it without modification. The second part is an annotation tool that reduces the number of manual annotations typically required. The third part is their 3D pose estimation architecture, the HybridNet PyTorch library, which combines 2D and 3D CNN architectures for faster and more precise training.

This system can be used with various species, including humans, non-human primates, rats, and mice. The group is currently building a database for each model to provide examples and instructions for getting started with JARVIS. They are also working on a complete user’s manual for implementing JARVIS in unique experimental designs. All downloads are available on GitHub.

This research tool was created by your colleagues. Please acknowledge the Principal Investigator, cite the article in which the tool was described, and include an RRID in the Materials and Methods of your future publications. RRID:SCR_022723

Documentation

Access the user manual, model database, and more!

GitHub site

Download the necessary tools and software from the source!
