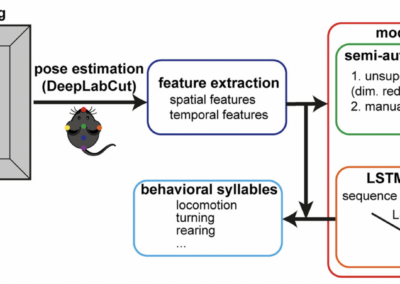

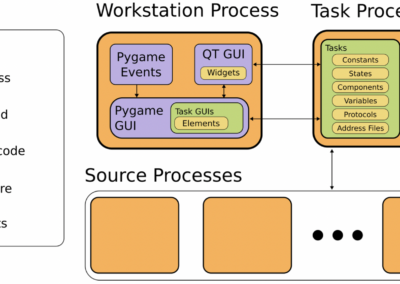

Markus Marks from ETH Zurich contributed this post on a new method for video analysis of multiple animals in complex real-world settings. This independently developed approach handles all aspects of video analysis, including segmentation, identification, and pose estimation. The analysis pipeline was developed in collaboration with Johannes Bohacek and others in the Institute for Neuroscience at ETH Zurich.

SIPEC is a recently developed deep-learning-based analysis pipeline for the behavior of multiple animals in complex environments. The core module is a neural network that performs action recognition of individual animals or groups of animals from raw video frames, i.e., it classifies an animal’s behavior (running, rearing, grooming, etc.) for each frame of the video. Furthermore, SIPEC can perform segmentation, identification, and pose estimation of individuals or interacting animals. A specialized neural network performs each task. The pipeline enables the flow of information between the neural networks, the aggregation of results, and visualization.

SIPEC was developed in Python with Keras/TensorFlow as the deep-learning backend. Labels for training the networks are generated using the VGG image and video annotator tools and a custom GUI. Pre-trained neural networks are available that can be re-used or re-trained using custom labels. The pipeline has been applied to videos of individual mice in an open field test arena and primates in a complex home-cage environment with changing backgrounds, lighting, etc.

The code is available at https://github.com/SIPEC. Installation is straightforward using conda/pip, or the pipeline can be used directly via Docker. Training the neural networks requires a GPU (Nvidia environment); for inference, a regular PC can be used. The development team provides support for users and asks that interested users contact them via email or GitHub.

This research tool was created by your colleagues.
Please acknowledge the Principal Investigator, cite the article in which the tool was described, and include an RRID in the Materials and Methods of your future publications. RRID:SCR_022364

Learn more about using SIPEC for multiple monkey tracking from bioRxiv. Get access to the SIPEC software from GitHub!
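
To make the idea of per-frame behavior classification more concrete, here is a minimal sketch of one thing you might do with that kind of output: collapse a sequence of per-frame labels into contiguous behavior bouts. This is not SIPEC's API. The `Bout` type, the `frames_to_bouts` function, and the example labels are all hypothetical and serve only to illustrate the data shape described above.

```python
from itertools import groupby
from typing import List, NamedTuple


class Bout(NamedTuple):
    """A contiguous run of frames that share one behavior label (hypothetical type)."""
    behavior: str
    start_frame: int  # inclusive
    end_frame: int    # exclusive


def frames_to_bouts(frame_labels: List[str]) -> List[Bout]:
    """Collapse a per-frame label sequence into contiguous behavior bouts."""
    bouts = []
    position = 0
    # groupby yields one group per run of identical consecutive labels.
    for behavior, run in groupby(frame_labels):
        length = sum(1 for _ in run)
        bouts.append(Bout(behavior, position, position + length))
        position += length
    return bouts


# Made-up per-frame labels for a 7-frame clip (assumption, not real SIPEC output).
labels = ["running", "running", "rearing", "rearing", "rearing", "grooming", "running"]
bouts = frames_to_bouts(labels)
for bout in bouts:
    print(bout.behavior, bout.start_frame, bout.end_frame)
```

Representing bouts with an inclusive start and exclusive end keeps the duration of each bout equal to `end_frame - start_frame`, which can then be converted to seconds using the video's frame rate.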
