BehaviorDEPOT

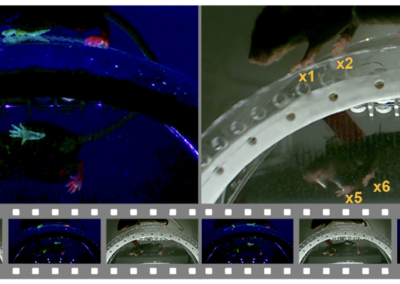

Advances in machine learning methods for video analysis have been crucial for understanding the neural underpinnings of freely moving behaviors. Many of these methods emphasize pose estimation and tracking, but options for classifying specific behaviors (such as grooming or freezing) are more limited by comparison. To address this gap in a way that’s accessible to the broader behavioral neuroscience community, Christopher Gabriel and colleagues developed and shared BehaviorDEPOT. This software detects behavior frame by frame from video time series and can analyze the results of common experimental assays, including fear conditioning, decision-making in a T-maze, open field, elevated plus maze, and novel object exploration. It can also be adapted to other experimental assays, and the graphical user interface keeps the power of the software accessible to users without coding experience.

BehaviorDEPOT calculates kinematic and postural statistics from the keypoint tracking data output by pose estimation software (DeepLabCut is featured throughout the manuscript, but the authors note that SLEAP can be used with a conversion script available on GitHub) and creates heuristics that reliably detect behaviors. Results are stored frame by frame, which allows for alignment to neural data. BehaviorDEPOT comprises six independent modules that run in MATLAB, which lets users create flexible pipelines to fit their experimental and analysis needs; the details are available in the eLife publication. The publication also provides validated results from a number of experimental designs, including tethered and non-tethered rodent examples. BehaviorDEPOT is available to install from GitHub, and the Wiki page provides helpful information about its use and applications.
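To make the idea of a keypoint-based heuristic concrete, here is a minimal Python sketch of one possible rule: label a frame as "freezing" when a tracked keypoint stays below a speed threshold for a minimum duration. This is an illustration only, not BehaviorDEPOT's own MATLAB code or its validated heuristics. The function name `detect_freezing`, its parameters, and the default threshold values are all hypothetical and would need tuning for real data.

```python
import numpy as np


def detect_freezing(xy, fps, speed_thresh=1.0, min_duration_s=1.0):
    """Return a per-frame boolean array marking candidate freezing bouts.

    Hypothetical illustration, not BehaviorDEPOT's implementation.
    xy: (n_frames, 2) positions of one keypoint, n_frames >= 2,
        in consistent units (e.g. cm).
    fps: video frame rate in frames per second.
    speed_thresh: speed (units per second) below which the animal counts as still.
    min_duration_s: shortest run of still frames accepted as a bout.
    """
    xy = np.asarray(xy, dtype=float)

    # Frame-to-frame displacement, converted to speed in units per second.
    step = np.linalg.norm(np.diff(xy, axis=0), axis=1)
    # Repeat the first value so speed has one entry per frame.
    speed = np.concatenate([step[:1], step]) * fps

    below = speed < speed_thresh
    min_frames = int(round(min_duration_s * fps))

    # Locate contiguous runs of below-threshold frames.
    padded = np.concatenate([[False], below, [False]]).astype(int)
    edges = np.diff(padded)
    starts = np.flatnonzero(edges == 1)
    stops = np.flatnonzero(edges == -1)

    # Keep only the runs that last long enough.
    freezing = np.zeros(len(below), dtype=bool)
    for start, stop in zip(starts, stops):
        if stop - start >= min_frames:
            freezing[start:stop] = True
    return freezing
```

Because the output has one entry per video frame, it can be indexed directly against frame-aligned neural recordings, which is the property the frame-by-frame storage described above is meant to provide.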

This research tool was created by your colleagues. Please acknowledge the Principal Investigator, cite the article in which the tool was described, and include an RRID in the Materials and Methods of your future publications. RRID:SCR_023602

Access BehaviorDEPOT from GitHub!

Check out the repository on GitHub.

Read more about it!

Check out more about the development and validation of this software from the eLife publication!