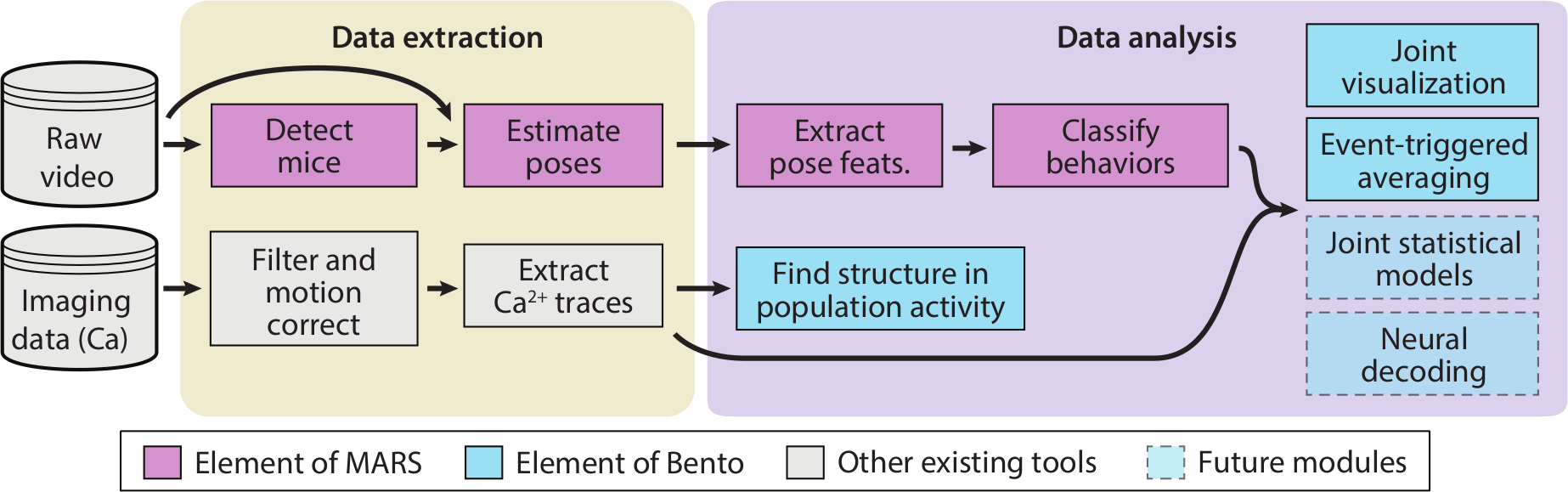

This contributed post describes a series of tools for joint analysis of behavioral and neural recording data: MARS, MARS-Developer, and BENTO.

The Mouse Action Recognition System (MARS) is a Python-based end-to-end pipeline for automated detection, pose estimation, and behavior classification in socially interacting mice. It was developed in a collaboration between the laboratories of Pietro Perona and David Anderson, by Cristina Segalin with Jalani Williams and Ann Kennedy. MARS was created to enable out-of-the-box classification of social behaviors in the resident-intruder assay, in unoperated or scope/cable-implanted animals of differing coat colors. The end-user version, available on GitHub, comes pre-trained on a dataset of 15,000 hand-annotated pose labels and 7 hours of behavioral video.

MARS processes behavior video in four steps. First, a detector network identifies the locations of both mice. Next, a pose estimation network describes the posture of each mouse in terms of a set of seven anatomically defined keypoints (the nose, ears, base of neck, hips, and base of tail). After that, MARS extracts a set of over 100 biologically relevant features from the postures of the two animals, saving these to a file for further analysis. Finally, MARS uses these saved features to perform frame-by-frame annotation of the behavior video for attack, mounting, and close-investigation behaviors. Researchers who use our open-source behavior acquisition hardware can use MARS with no manual training steps to automatically track and score their behavioral assays.

Because not every lab wants to study resident-intruder behavior, we have also released MARS-Developer.

Finally, the Behavior Ensemble and Neural Trajectory Observatory (BENTO) is a MATLAB-based user interface for visualizing and analyzing multimodal neuroscience datasets. The core goal of BENTO is to sync up diverse data streams (including video, pose estimates, neural recordings, audio, and behavior annotations), making it easier to visually correlate an animal's neural activity with its actions.
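To give a feel for what syncing data streams involves, here is a minimal Python sketch that resamples a neural trace onto video frame times by linear interpolation. It is not BENTO's implementation. The function name `resample_to_frames`, the sampling rates, and the synthetic trace are all hypothetical, and the sketch assumes both streams start at the same moment and are sampled at constant rates.

```python
import numpy as np


def resample_to_frames(signal, signal_rate_hz, frame_rate_hz, n_frames):
    """Linearly interpolate a 1-D signal onto video frame timestamps.

    Assumes (for illustration only) that the signal and the video start
    at time zero together and that both rates are constant.
    """
    signal = np.asarray(signal, dtype=float)
    signal_times = np.arange(signal.size) / signal_rate_hz  # seconds
    frame_times = np.arange(n_frames) / frame_rate_hz       # seconds
    # np.interp holds the first/last sample value outside the signal's range.
    return np.interp(frame_times, signal_times, signal)


# Hypothetical data: 2 s of a neural trace at 10 Hz whose value equals
# its own timestamp, aligned to 60 video frames recorded at 30 fps.
neural = np.arange(20) / 10.0
aligned = resample_to_frames(neural, signal_rate_hz=10.0,
                             frame_rate_hz=30.0, n_frames=60)
```

After this step every video frame has exactly one neural value, so frame-indexed behavior annotations and pose features can be compared against neural activity directly. Real recordings usually need explicit timestamps or sync pulses rather than an assumed shared start time.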
BENTO also includes interfaces for saving video clips showing behavior side-by-side with neural activity, for manual annotation of new behaviors of interest, and for some common neural data analyses including PCA, k-means clustering, and event-triggered averaging. When combined with MARS, BENTO will display the MARS pose features, and includes an interface to apply thresholds to any combination of features to create annotations programmatically. Beyond using BENTO as a graphical interface, the load_experiment function will read your data into a formatted MATLAB struct which can be used as a starting point for your own downstream analysis. Finally, a Python version of BENTO is currently in development, which will additionally include a full SQL back end, allowing users to create and curate a database of their experiments and animals, with no coding required.

All of these tools are free and open-source, and can be obtained via the GitHub repository.

MARS, MARS-Developer, and BENTO

Get access to this collection of tools on GitHub. Read more about MARS from the recent preprint on bioRxiv!

GitHub Repository

Read the Paper

Have questions? Send us an email!