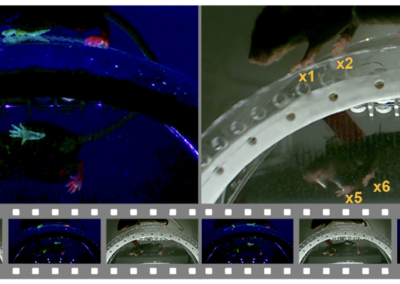

SLEAP, LEAP, and MotionMapper

A project at Princeton offers an interesting way to do video analysis in behavioral neuroscience applications. MotionMapper, which has been described in a published paper, works on videos (not poses) and uses image analysis and multivariate statistical methods (such as PCA, wavelets, and t-SNE). It has been used to find stereotyped behaviors in flies, mice, and other species.

LEAP is a relatively fast method for pose estimation that uses deep neural networks. LEAP stands for LEAP Estimates Animal Pose. A paper on LEAP has been published.

SLEAP is the next generation of LEAP and allows for tracking multiple animals, hence the new name SLEAP, or Social LEAP. The lead developer is Talmo Pereira, and a preprint on the framework is available.

We evaluated SLEAP using videos provided by the project, videos from our lab, and videos from the OB video repository. We found the API to be very well documented and the labeling GUI to be well developed and easy to use, and training ran quickly on the GPU servers from Colab. Analyses finished within a couple of hours, and there is an option for early stopping. Numerous resources are provided, including ones for using SLEAP’s graphical user interface for labeling videos.

Three features of the software stood out to us. First, the labeling process includes what the developers call “interactive labeling”, in which the GUI suggests new frames that need labeling based on the user’s choice among some statistical measures. Second, after tracking is performed, the interface allows for what the developers call “proofreading”, which lets users analyze videos not used in training and inspect and correct errors in the tracking or pose estimation in these videos. Third, SLEAP saves all results into an easily accessed h5 file, and the results of an analysis can be shared as a .slp file. This feature seems excellent for reproducibility.

If you are doing a lot of video tracking or pose estimation, it might be worth your time to check out SLEAP.

This research tool was created by your colleagues. Please acknowledge the Principal Investigator, cite the article in which the tool was described, and include an RRID in the Materials and Methods of your future publications. Project portal RRID:SCR_021383; SLEAP software RRID:SCR_021382; MotionMapper software RRID:SCR_021384.

Get access to SLEAP files and instructions on GitHub. Read more about SLEAP in their Nature Methods paper!
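For readers who want to work with the h5 results mentioned above, here is a minimal Python sketch of loading tracked coordinates and checking how often each body part went untracked. It rests on assumptions we did not verify against a specific SLEAP version: that the exported file has datasets named `tracks` and `node_names`, and that transposing `tracks` yields an array shaped (frames, nodes, 2, tracks) with NaN marking missing points. The function names and the file name are hypothetical.

```python
import numpy as np


def load_tracks(path):
    """Load coordinates and node names from an exported analysis h5 file.

    Assumption: the file holds datasets called "tracks" and "node_names".
    Check your own export, since names and layout may differ by version.
    """
    import h5py  # third-party package, imported only when a file is read

    with h5py.File(path, "r") as f:
        # Assumed layout after transposing: (frames, nodes, 2, tracks)
        tracks = f["tracks"][:].T
        node_names = [name.decode() for name in f["node_names"][:]]
    return tracks, node_names


def missing_fraction(tracks):
    """Fraction of frames in which each node of each track is untracked.

    `tracks` is expected to be shaped (frames, nodes, 2, tracks), with NaN
    for missing points. Returns an array shaped (nodes, tracks).
    """
    # A point counts as missing if either its x or its y coordinate is NaN.
    missing = np.isnan(tracks).any(axis=2)
    return missing.mean(axis=0)


if __name__ == "__main__":
    # "predictions.analysis.h5" is a placeholder file name.
    tracks, node_names = load_tracks("predictions.analysis.h5")
    for name, fractions in zip(node_names, missing_fraction(tracks)):
        print(name, fractions)
```

A high missing fraction for a particular body part may be a sign that more labeled frames or some proofreading would help before further analysis.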
