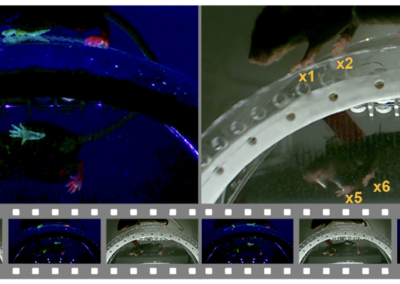

TRex is an open-source animal tracking tool developed by Tristan Walter and Iain Couzin. In recent years, visual tracking of animal movement has become a valuable method for studying animal behavior. TRex seeks to improve upon existing animal tracking software by providing a user-friendly tool that runs 2.5 to 46.7 times faster and requires 2 to 10 times less memory than comparable software.

TRex can track the movements of up to 256 individuals simultaneously in real time, and it can identify up to 100 unmarked individuals. Because TRex uses background subtraction to detect and track subjects, the only requirement is that moving objects and individuals be distinguishable and separable from their background. Additionally, TRex can estimate 2D visual fields and outlines of individuals, and can estimate the head and rear of bilateral animals. It can do so in both open-loop and closed-loop contexts. The relative performance and accuracy of TRex improve as the number of organisms studied and the video length increase.

Given these capabilities, TRex can be used in a wide range of behavior studies. For instance, it can provide metrics for individual animals, such as body shape and head and tail positions. It can also provide insight into animals’ postures: for example, TRex can be used to track courtship displays, which could not be understood from positional data alone. Furthermore, TRex can be used to computationally reconstruct the visual fields of the individuals being studied.

TRex is platform independent and runs on all major operating systems. It offers complete batch processing support, meaning that users can quickly and effectively process sets of video data without human intervention. TRex can be configured and run through settings files, from the graphical user interface (GUI), or through the command line.
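As noted above, TRex relies on background subtraction, which is why subjects need to stand out from their background. The toy Python sketch below illustrates the general idea only; it is not TRex's implementation. The function names, the per-pixel median background estimate, and the threshold value are all assumptions chosen for illustration.

```python
from statistics import median

def estimate_background(frames):
    """Estimate a static background as the per-pixel median across frames."""
    height, width = len(frames[0]), len(frames[0][0])
    return [[median(f[y][x] for f in frames) for x in range(width)]
            for y in range(height)]

def foreground_mask(frame, background, threshold=50):
    """Mark pixels that differ from the background by more than `threshold`."""
    return [[abs(p - b) > threshold for p, b in zip(row, bg_row)]
            for row, bg_row in zip(frame, background)]

def centroid(mask):
    """Return the mean (x, y) of foreground pixels, or None if none are found."""
    points = [(x, y) for y, row in enumerate(mask) for x, v in enumerate(row) if v]
    if not points:
        return None
    n = len(points)
    return (sum(x for x, _ in points) / n, sum(y for _, y in points) / n)

def make_frame(x, y, size=5, bg=200, animal=20):
    """Build a toy grayscale frame: bright background, one dark 'animal' pixel."""
    frame = [[bg] * size for _ in range(size)]
    frame[y][x] = animal
    return frame

# Toy video (hypothetical data): the animal moves along the diagonal.
frames = [make_frame(i, i) for i in range(5)]
background = estimate_background(frames)
track = [centroid(foreground_mask(f, background)) for f in frames]
print(track)
```

If the animal had the same intensity as the background, the mask would be empty and no position could be recovered, which mirrors the requirement that individuals be distinguishable from their surroundings.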
Code and documentation are available on the project's GitHub and Docs pages, and more information about the project can be found in the authors' eLife publication.

This research tool was created by your colleagues. Please acknowledge the Principal Investigator, cite the article in which the tool was described, and include an RRID in the Materials and Methods of your future publications. RRID:SCR_022361

Learn more about TRex, its implementation, and validation data from the eLife publication! Get access to the software, docs, and tutorials from the TRex Docs page!

This post was brought to you by Sydney Cerveny. This project summary is part of a collection from neuroscience undergraduate and graduate students in the Computational Methods course at American University.

Read the Paper

TRex Documentation

Thanks, Sydney!
