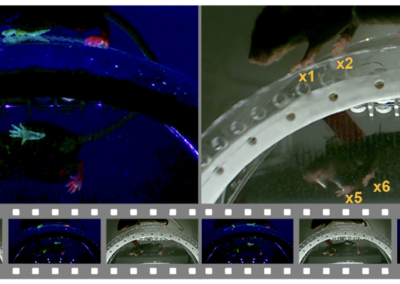

The emergence of pose-estimation tools for animal studies has provided a means of accurately tracking postural dynamics and locomotion in freely behaving animals. However, translating pose data into dynamic behavioral states remains a challenge. Kevin Luxem and colleagues developed VAME, an unsupervised probabilistic deep learning framework that finds behavioral motifs in pose-estimation data.

VAME’s deep learning framework, built on PyTorch, uses three bidirectional recurrent neural networks (a machine learning architecture well suited to time-series data) to identify and model repeated postural expressions with high spatiotemporal accuracy. These networks serve as the encoder and decoders of a variational autoencoder, which embeds the pose time series in a latent space where the animal’s latent behavioral states can be identified. VAME can be installed in an Anaconda environment after cloning its GitHub repository locally.

VAME’s function is two-fold. First, it extracts behavioral motifs from postural data by identifying “re-used units of movement”. Second, it analyzes how these motifs occur across the full time series to determine the transition patterns between postural states. VAME is suited to analyzing pose-estimation data for a host of behaviors (grooming, licking, walking, rearing, social interaction, etc.).

VAME is an open-source, Python-based tool that can augment quantitative behavioral analyses of data derived from standard pose-estimation software packages. It was written around core functions from DeepLabCut and works readily with pose data from that package. It can also work with data from other pose-estimation packages, such as SLEAP.

This research tool was created by your colleagues. Please acknowledge the Principal Investigator, cite the article in which the tool was described, and include an RRID in the Materials and Methods of your future publications. VAME software RRID: SCR_022477. The full paper, including extensive examples of the behaviors the program has been able to identify, can be found here!
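
VAME’s model is a variational autoencoder. The two generic ingredients that make an autoencoder “variational” can be sketched in plain Python. First, the reparameterization trick samples a latent vector from the encoder’s predicted mean and log-variance. Second, a closed-form KL term pulls that distribution toward a standard normal. This is textbook VAE math, not code from the VAME repository. The encoder that would produce `mu` and `log_var` (in VAME, recurrent networks over a window of pose data) is omitted, and the function names are hypothetical.

```python
import math
import random
from typing import List, Sequence


def reparameterize(mu: Sequence[float], log_var: Sequence[float],
                   rng: random.Random) -> List[float]:
    """Sample z = mu + sigma * eps, eps ~ N(0, 1), one draw per latent dimension.

    Writing the sample this way keeps z differentiable with respect to mu and
    log_var, which is what allows a VAE to be trained by backpropagation.
    """
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu: Sequence[float], log_var: Sequence[float]) -> float:
    """Closed-form KL(N(mu, sigma^2) || N(0, 1)), summed over latent dimensions."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv)
                      for m, lv in zip(mu, log_var))


if __name__ == "__main__":
    # Hypothetical encoder outputs for one window of pose data (2-D latent space).
    mu, log_var = [0.5, -1.0], [0.0, -2.0]
    z = reparameterize(mu, log_var, random.Random(0))
    print("latent sample:", z)
    print("KL term:", kl_to_standard_normal(mu, log_var))
```

In a full model, this KL term is added to the reconstruction (and, in VAME’s case, prediction) losses. That combination keeps the latent space smooth enough for nearby points to correspond to similar movements.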
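
The two-fold analysis has two parts. Motif extraction turns a latent trajectory into a sequence of discrete motif labels, and transition analysis counts how often one motif follows another. The following is a minimal sketch that assumes latent vectors are already available. Motifs are assigned by nearest-centroid (k-means-style) matching to hypothetical cluster centers, a simplification chosen for illustration and not necessarily VAME’s own segmentation method. Motif usage and a row-normalized transition matrix are then computed from the label sequence. All function names are hypothetical.

```python
from collections import Counter
from typing import List, Sequence


def assign_motifs(latents: Sequence[Sequence[float]],
                  centroids: Sequence[Sequence[float]]) -> List[int]:
    """Label each latent vector with the index of its nearest centroid (squared Euclidean)."""
    labels = []
    for z in latents:
        dists = [sum((a - b) ** 2 for a, b in zip(z, c)) for c in centroids]
        labels.append(dists.index(min(dists)))
    return labels


def motif_usage(labels: Sequence[int], n_motifs: int) -> List[float]:
    """Fraction of frames assigned to each motif."""
    if not labels:
        return [0.0] * n_motifs
    counts = Counter(labels)
    return [counts[k] / len(labels) for k in range(n_motifs)]


def transition_matrix(labels: Sequence[int], n_motifs: int,
                      ignore_self: bool = False) -> List[List[float]]:
    """Row-normalized matrix: P[i][j] = fraction of transitions out of motif i that go to j."""
    counts = [[0] * n_motifs for _ in range(n_motifs)]
    for prev, nxt in zip(labels, labels[1:]):
        if ignore_self and prev == nxt:
            continue  # optionally count only switches between different motifs
        counts[prev][nxt] += 1
    matrix = []
    for row in counts:
        total = sum(row)
        matrix.append([c / total if total else 0.0 for c in row])
    return matrix


if __name__ == "__main__":
    latents = [[0.0, 0.0], [0.1, 0.0], [5.0, 5.0], [5.1, 4.9], [0.0, 0.2]]
    centroids = [[0.0, 0.0], [5.0, 5.0]]  # hypothetical motif centers
    labels = assign_motifs(latents, centroids)
    print(labels)                         # [0, 0, 1, 1, 0]
    print(motif_usage(labels, 2))         # [0.6, 0.4]
    print(transition_matrix(labels, 2))   # [[0.5, 0.5], [0.5, 0.5]]
```

Counting self-transitions is a design choice. Frame-by-frame labels are dominated by a motif following itself, so `ignore_self=True` highlights the switches between motifs instead.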
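
Pose data usually enters a pipeline like this from a DeepLabCut export. The sketch below reads a single-animal DeepLabCut CSV, which has three header rows (`scorer`, `bodyparts`, `coords`) followed by one row per frame with `x`, `y`, and `likelihood` columns for each body part. It masks low-confidence points as NaN. This is a hypothetical stand-in, not VAME’s own data-preparation code. The 0.9 likelihood cutoff is an assumption, and multi-animal files (which add an `individuals` header row) are not handled.

```python
import csv
import io
import math
from typing import Dict, List, TextIO


def read_dlc_csv(f: TextIO, min_likelihood: float = 0.9) -> Dict[str, Dict[str, List[float]]]:
    """Parse a single-animal DeepLabCut CSV into {bodypart: {"x", "y", "likelihood"}}.

    Points whose likelihood falls below min_likelihood are replaced with NaN.
    """
    rows = list(csv.reader(f))
    bodyparts, coords, data = rows[1], rows[2], rows[3:]
    poses: Dict[str, Dict[str, List[float]]] = {}
    for col in range(1, len(coords)):  # column 0 holds the frame index
        series = poses.setdefault(bodyparts[col], {"x": [], "y": [], "likelihood": []})
        series[coords[col]] = [float(row[col]) for row in data]
    for series in poses.values():
        for i, p in enumerate(series["likelihood"]):
            if p < min_likelihood:
                series["x"][i] = math.nan
                series["y"][i] = math.nan
    return poses


if __name__ == "__main__":
    sample = (
        "scorer,DLC_model,DLC_model,DLC_model\n"
        "bodyparts,nose,nose,nose\n"
        "coords,x,y,likelihood\n"
        "0,10.0,20.0,0.99\n"
        "1,11.0,21.0,0.10\n"
    )
    print(read_dlc_csv(io.StringIO(sample)))
```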

Get access to the software, docs, and tutorials from the GitHub page!

VAME: Variational Embedding of Animal Motion

Read the VAME Paper

VAME GitHub site

Have questions? Send us an email!