LiftPose3D

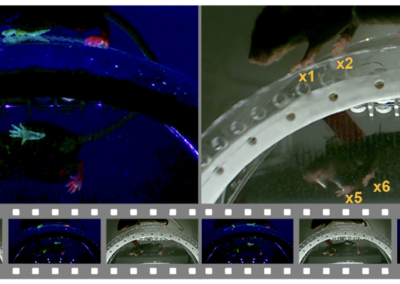

Several methods for tracking behavior in three dimensions have emerged in recent years. Two related packages are DeepFly3D (Günel et al., 2019) and LiftPose3D (Gosztolai et al., 2021), both developed by Pavan Ramdya's research group in the Neuroengineering Laboratory at École polytechnique fédérale de Lausanne (EPFL). DeepFly3D, previously featured on OpenBehavior, was developed for 3D tracking of specific body parts in tethered Drosophila. It adapts a human pose estimation method (Newell et al., 2016), implemented with the PyTorch library, to track body parts in 2D camera images, and uses custom-written code to triangulate body part positions across multiple cameras. Software from DeepFly3D was later incorporated into LiftPose3D, which uses deep learning to lift 2D poses into 3D, reducing the reliance on multi-camera triangulation. The package was shown to work for tracking movements in three dimensions in behaving flies, mice, rats, and non-human primates. Extensive documentation is provided for both packages. Camera synchronization and calibration are crucial for successfully using 3D tracking methods. The packages and their associated publications include extensive routines for synchronization and calibration, and are worth reviewing if you are interested in 3D behavioral analysis.
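To give a feel for what triangulating a body part across calibrated cameras involves, here is a minimal sketch of linear (DLT) triangulation in NumPy. It is not the code used in DeepFly3D or LiftPose3D. The function names (`make_camera`, `project`, `triangulate_point`), the two toy cameras, and the test point are all made up for illustration, and the sketch assumes noise-free 2D detections and already-known projection matrices, which is exactly what calibration is meant to provide.

```python
import numpy as np


def make_camera(K, R, t):
    """Build a 3x4 projection matrix P = K [R | t] (hypothetical helper)."""
    return K @ np.hstack([R, t.reshape(3, 1)])


def project(P, X):
    """Project a 3D point X into pixel coordinates with projection matrix P."""
    x = P @ np.append(X, 1.0)
    return x[:2] / x[2]


def triangulate_point(projections, points_2d):
    """Linear (DLT) triangulation of one 3D point from two or more views.

    Each view contributes two linear equations in the homogeneous 3D point;
    the solution is the right singular vector with the smallest singular value.
    """
    rows = []
    for P, (u, v) in zip(projections, points_2d):
        rows.append(u * P[2] - P[0])
        rows.append(v * P[2] - P[1])
    A = np.stack(rows)
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]
    return X[:3] / X[3]


# Toy setup (assumed values): shared intrinsics, two cameras 30 degrees apart.
K = np.array([[800.0, 0.0, 320.0],
              [0.0, 800.0, 240.0],
              [0.0, 0.0, 1.0]])
theta = np.deg2rad(30.0)
R1, t1 = np.eye(3), np.zeros(3)
R2 = np.array([[np.cos(theta), 0.0, np.sin(theta)],
               [0.0, 1.0, 0.0],
               [-np.sin(theta), 0.0, np.cos(theta)]])
t2 = np.array([-1.0, 0.0, 0.5])
P1, P2 = make_camera(K, R1, t1), make_camera(K, R2, t2)

# A made-up "body part" position, its 2D detections, and the reconstruction.
X_true = np.array([0.1, -0.2, 4.0])
detections = [project(P1, X_true), project(P2, X_true)]
X_est = triangulate_point([P1, P2], detections)
print(X_est)
```

With real data the 2D detections are noisy and the cameras must be synchronized, so the reconstruction is only as good as the calibration and the frame alignment, which is why both packages put so much effort into those steps.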

This research tool was created by your colleagues. Please acknowledge the Principal Investigator, cite the article in which the tool was described, and include an RRID in the Materials and Methods of your future publications. LiftPose3D RRID:SCR_023049; DeepFly3D RRID:SCR_021402

LiftPose3D Documentation

Read the Nature Methods paper!

GitHub

Download the necessary files and check out the tutorials from the source!

More about DeepFly3D!

Read more about the DeepFly3D project and access relevant links from our 2020 OB Post!