Jul 25, 2025 | In the Community, OpenBehavior Team Update, Uncategorized, Video Analysis

The OpenBehavior team explored the use of three advanced video analysis tools in behavioral neuroscience during summer 2025. These tools are SLEAP for pose estimation, DeepEthogram for tracking and timing action sequences, and A-SOiD for measuring movement dynamics...

Jul 18, 2025 | In the Community, OpenBehavior Team Update, Uncategorized

Over the past several months, we’ve highlighted open-source projects that have captured the interest of our followers, focusing on specific categories such as behavioral devices or video analysis programs. In this final post of the series, we review projects...

Jul 11, 2025 | In the Community, OpenBehavior Team Update, Resources, Uncategorized, Video Analysis

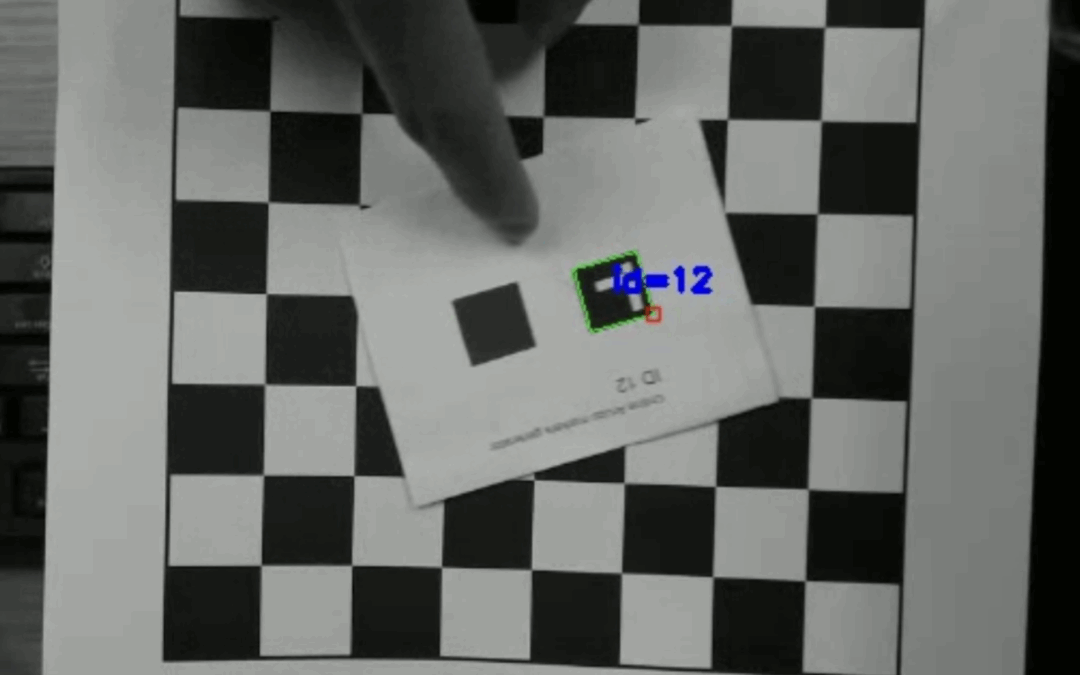

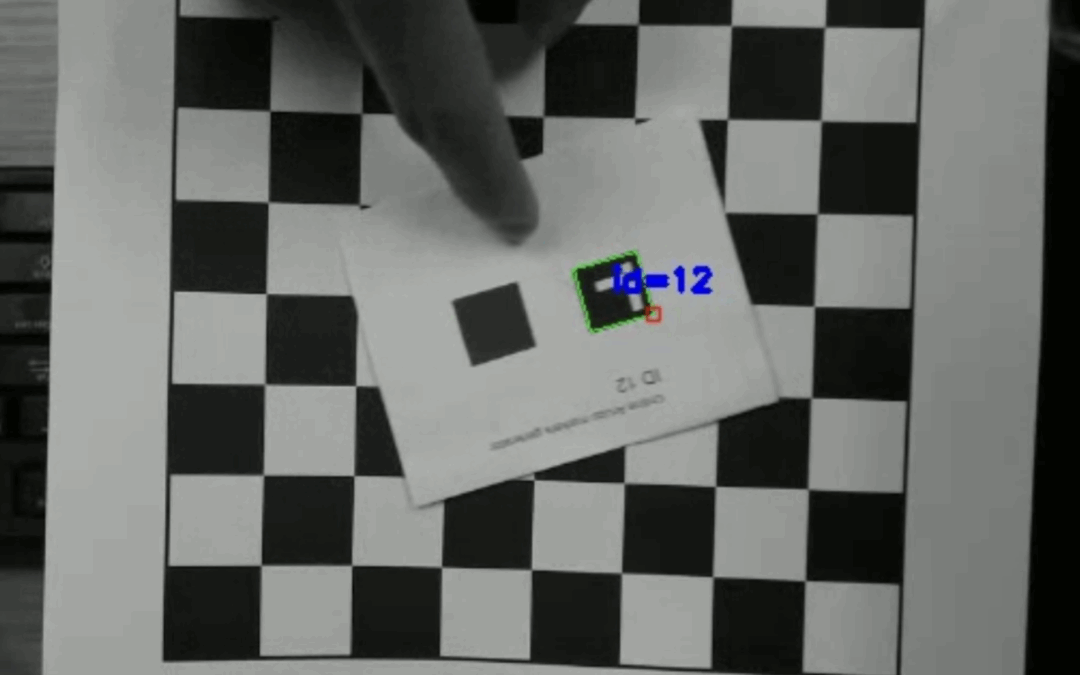

This week’s post was written by Ray Shen, an undergraduate student from the University of Maryland who is working with the OB team this summer.

Real-Time ArUco Marker Detection in Bonsai-RX

ArUco markers are fiducial markers widely used for camera pose...

Jun 20, 2025 | Arduino, In the Community, OpenBehavior Team Update, Resources, Uncategorized

Simplifying Video Analysis: An Arduino Trial Counter

Many neuroscience labs record videos of animals performing behavioral tasks. When your video system is fully integrated and cameras are precisely synchronized with your behavioral control system, finding frames for...

Jun 13, 2025 | In the Community, OpenBehavior Team Update, Uncategorized

The OpenBehavior Project primarily focuses on tools for behavioral testing and data analysis. However, our database also features a growing number of valuable open-source tools for imaging, including microscopy, calcium imaging, optical sensors, and integrated...

Jun 6, 2025 | In the Community, OpenBehavior Team Update, Uncategorized

The OpenBehavior Project primarily focuses on tools for behavioral testing and data analysis. However, our database also features a growing number of valuable open-source tools for electrophysiology experiments that have been reported on our website over the past five...